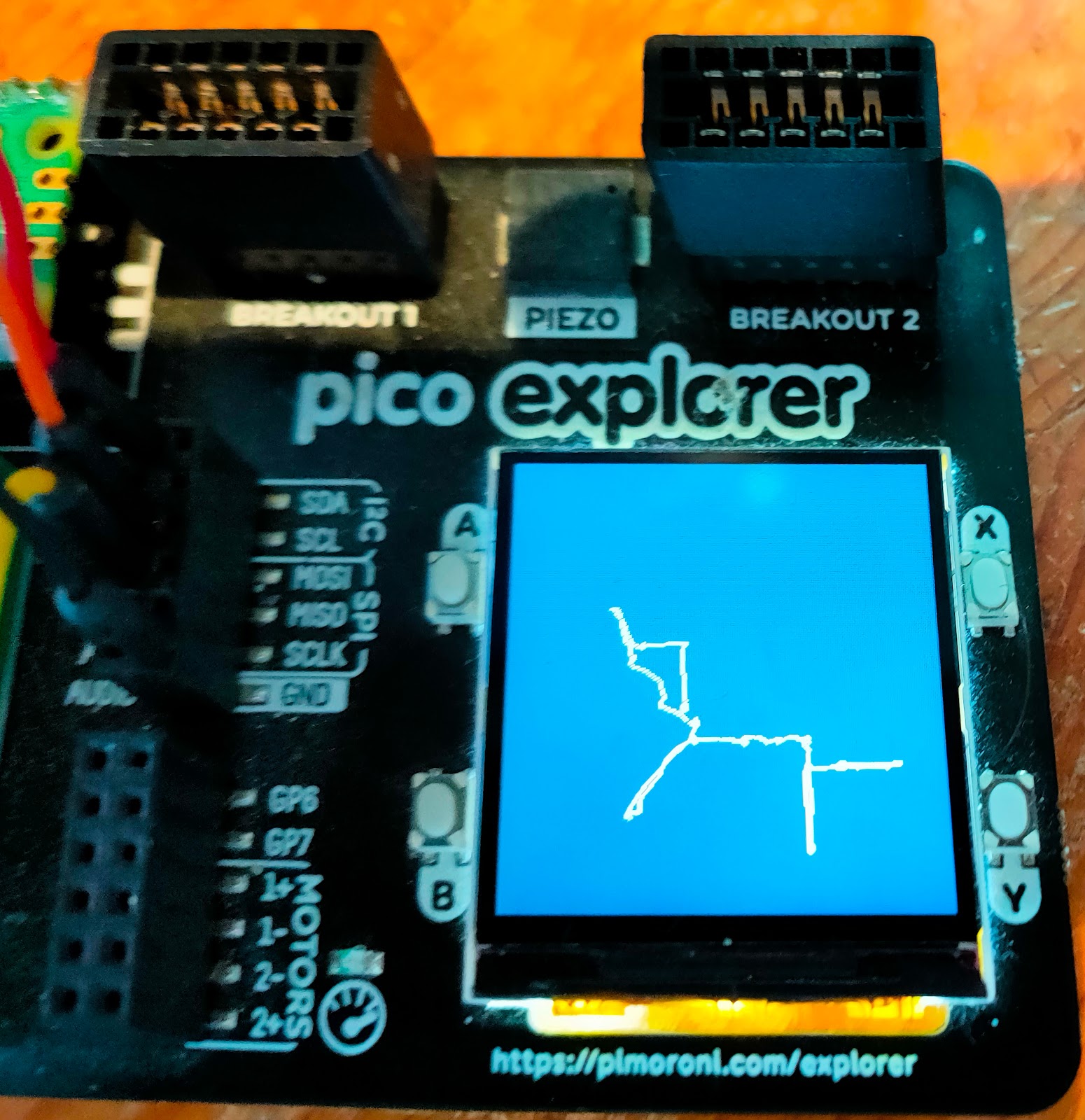

The Pimoroni Presto - perfect form, fantastic features

I've just unboxed a Pimoroni Presto, and it is the most beautiful hardware I've ever seen. The front is clean and lean; the display is crisp, with lots of room. On the right is a low-res image of the screen; the full-resolution display is even sharper. It's perfect for MARPLE, my current project: an APL interpreter and development environment. MARPLE runs on workstations, but to my surprise and delight it also runs well on the Raspberry Pi Pico 2, whose RP2350 chip also powers the Presto.

The back of the Presto is packed with features: an SD card slot, two Qw/ST ports for I2C connections, a piezo buzzer, a battery connector, reset and boot buttons, and backlighting LEDs. There are extra accessories in the starter kit, but I've work to do before I get to use them. I've got my APL character set working, and tomorrow I'll start work on installing the interpreter. I need the SD card slot for saving code, and I want to add an interface to I2C. The Presto is exactly wh...